x = nn.soft_plus(x) The Softplus activation Swish–Sigmoid Linear Unit( SiLU) The Softplus activation returns values as zero and above. The difference is that tanh converges exponentially while Soft sign converges polynomially. It is similar to the hyperbolic tangent activation function– tanh. The Soft sign activation function caps values between -1 and 1. GLU helps tackle the vanishing gradient problem. In the formula, the b gate controls what information is passed to the next layer. It has been applied in Gated CNNs for natural language processing. GLU is computed as GLU (a ,b )=a ⊗σ (b ). x = nn.gelu(x) GLU – Gated linear unit activation

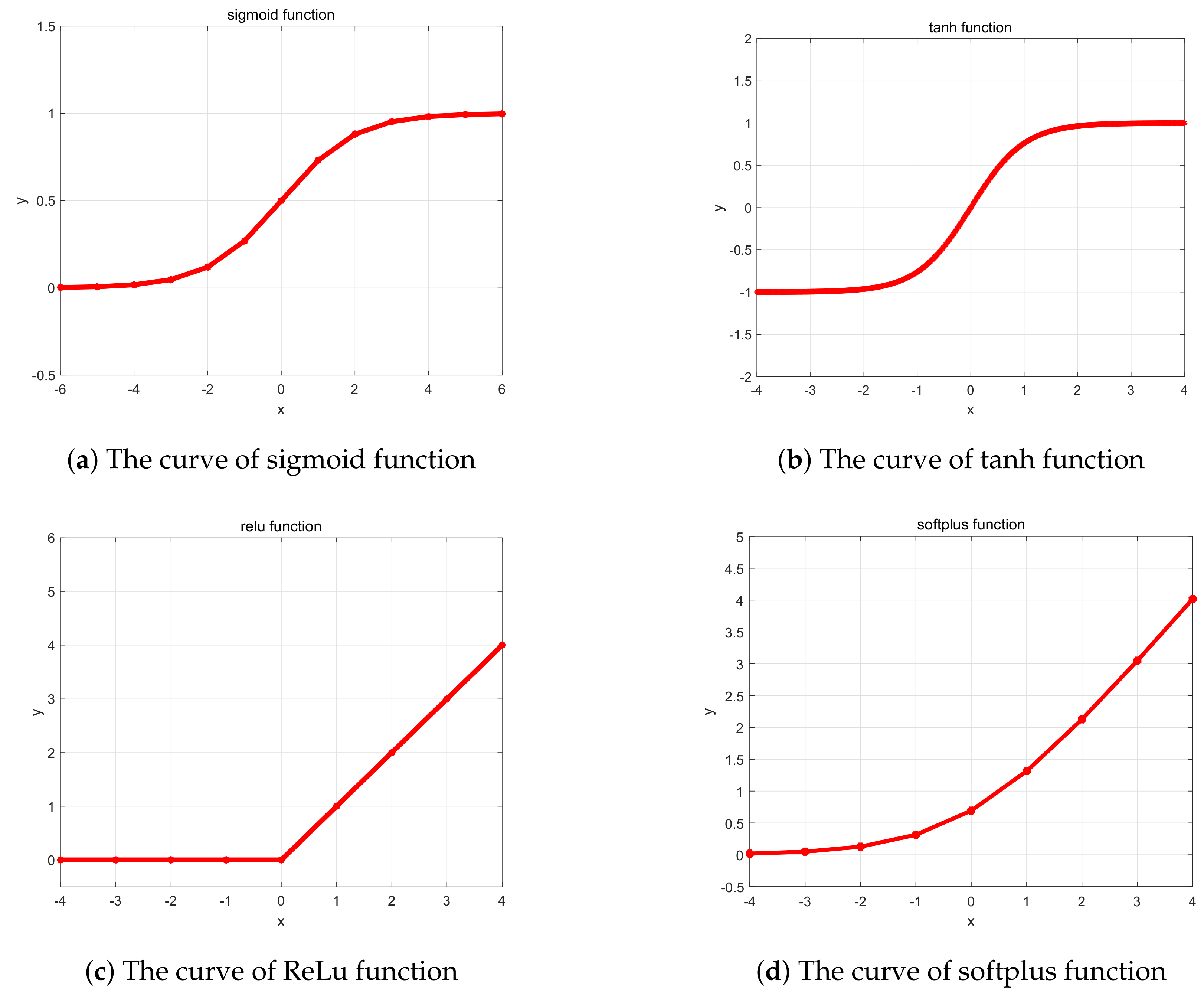

GELU non-linearity weights inputs by their value rather than gates inputs by their sign as in ReLU– Source. x = nn.celu(x) GELU– Gaussian error linear unit activation x = nn.elu(x) CELU – Continuously-differentiable exponential linear unitĬELU is ELU that is continuously differentiable. ELUs may lead to faster training and better generalization in networks with more than five layers.įor values above zero the number is returned as is but for numbers below zeros they are a number that is less that but close to zero. Unlike ReLu, ELU allows negative numbers pushing the mean unit activations closer to zero. x = nn.log_softmax(x) ELU – Exponential linear unit activationĮLU activation function helps in solving the vanishing and exploding gradients problem. Log softmax computes the logarithm of the softmax function, which rescales elements to the range −∞ to 0. Use the softmax activation when there is only one correct answer. For example, a picture is either grayscale or color. The softmax activation function is a variant of the sigmoid function used in multi-class problems where labels are mutually exclusive. Log sigmoid computes the log of the sigmoid activation, and its output is within the range of −∞ to 0. Use the sigmoid function when there is more than one correct answer. Just because there is a car in the image doesn’t mean a tree can’t be in the picture. For example, an image can have a car, a building, a tree, etc. Sigmoid is used where the classes are non-exclusive. The sigmoid activation function caps output to a number between 0 and 1 and is mainly used for binary classification tasks. It works by introducing a– a learnable parameter. Parametric Rectified Linear Unit is ReLU with extra parameters equal to the number of channels. Return x PReLU– Parametric Rectified Linear Unit On line 9 we apply the ReLu activation function after the convolution layer. This ensures that there are no negative numbers in the network. 
Outputs below zero are returned as zero, while numbers above zero are returned as they are. The function caps all outputs to zero and above. The ReLU activation function is primarily used in the hidden layers of neural networks to ensure non-linearity. Let's look at common activation functions in JAX and Flax. You should, therefore, choose an appropriate activation function for the problem being solved. However, in a regression problem, you want the numerical prediction of a quantity, for example, the price of an item. This indicates the probability of an item belonging to either of the two classes. For instance, when solving a binary classification problem, the outcome should be a number between 0 and 1. The activations functions cap the output within a specific range. Activation functions are applied in neural networks to ensure that the network outputs the desired result.
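To make the output ranges described in this post concrete, here is a minimal sketch that applies several of these activations to the same small array. It makes a few assumptions: `nn` here is `jax.nn` (the post's own `nn` alias is not defined in this excerpt), the sample arrays `x` and `logits` are made up for illustration, and `glu_pair` is a hypothetical helper that writes out the a ⊗ σ(b) formula directly rather than calling a library GLU.

```python
import jax.numpy as jnp
from jax import nn  # assumption: jax.nn stands in for the post's `nn` alias

# Hypothetical sample input covering negative, zero, and positive values.
x = jnp.array([-3.0, -1.0, 0.0, 1.0, 3.0])

relu_out = nn.relu(x)                # negatives become 0, positives pass through
sigmoid_out = nn.sigmoid(x)          # every value lands between 0 and 1
log_sigmoid_out = nn.log_sigmoid(x)  # log of the sigmoid, never above 0
elu_out = nn.elu(x)                  # negatives map to values between -1 and 0
soft_sign_out = nn.soft_sign(x)      # x / (1 + |x|), between -1 and 1
softplus_out = nn.softplus(x)        # log(1 + exp(x)), always positive
silu_out = nn.silu(x)                # x * sigmoid(x), also known as Swish

# Hypothetical class scores for a three-class, single-label problem.
logits = jnp.array([2.0, 1.0, 0.1])
softmax_out = nn.softmax(logits)          # probabilities that sum to 1
log_softmax_out = nn.log_softmax(logits)  # log-probabilities, never above 0


def glu_pair(a, b):
    """Hypothetical helper: GLU(a, b) = a * sigmoid(b), element-wise."""
    return a * nn.sigmoid(b)


gated = glu_pair(jnp.ones(2), jnp.zeros(2))  # sigmoid(0) = 0.5 halves each value

print("relu:    ", relu_out)
print("sigmoid: ", sigmoid_out)
print("softmax: ", softmax_out)
print("glu_pair:", gated)
```

Running the same input through each function side by side is a quick way to check which one matches the output range a task needs, for example sigmoid for independent labels or softmax for mutually exclusive classes.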
